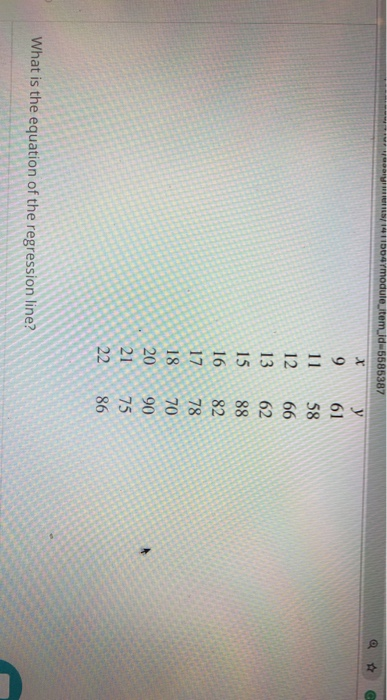

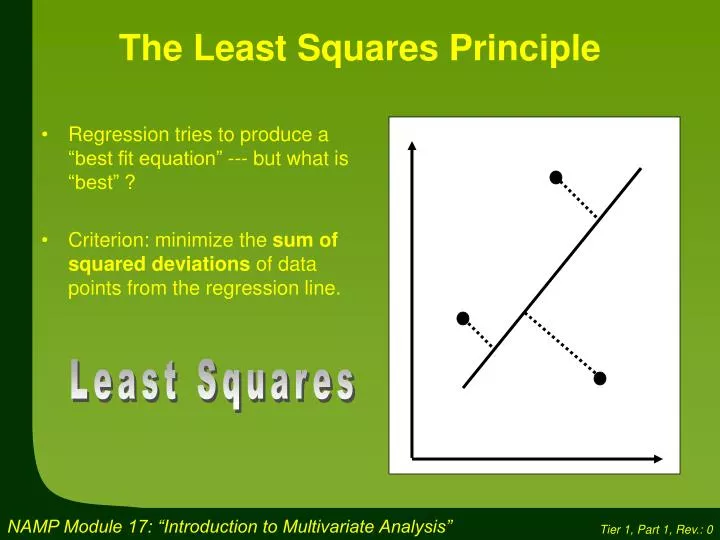

Models based on a power law are prevalent in many areas of study. When regression analysis is performed on data sets modeled by a power law, the traditional model uses a lead coefficient. However, the proposed model replaces the lead coefficient with a scaling parameter and reduces uncertainties in best-fit parameters for data sets with exponents close to 3. This study extends previous work by testing each model for a range of parameters. Data sets with known values of the scaling parameter and exponent were generated by adding normally distributed random errors with a controlled mean and standard deviation to underlying power laws. These data sets were then analyzed for both forms of the power law. Uncertainties in each parameter value were computed by standard methods and returned by the fitting algorithm in SciDAVis (version 1.D013), a regression analysis program vetted for accuracy in a previous study. From the data collected, relative errors and uncertainties were then computed. Any given value of a condition was tested once alongside all possible combinations of values for the other conditions. This ensured that the hypothesis was tested over a number of parameter values and conditions representative of a common range of experimental conditions. A total of 180 data sets were analyzed to cover all of these parameter combinations. For the scaling parameter, the proposed model provided smaller errors in 96/180 cases and smaller uncertainties in 88/180 cases. In most remaining cases, the traditional model provided smaller errors or uncertainties. Examination of the conditions indicates that the proposed law has potential in select cases. However, it is ambiguous which conditions favor one model over the other. An approach similar to the one in this study is therefore encouraged for determining which model will offer reduced errors and uncertainties in data sets where additional accuracy is desired.
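The data-generation and error steps described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the study's procedure. The fit is plain least squares written from scratch, not SciDAVis. The "scaling parameter" form is assumed, for illustration only, to be $y = (x/c)^b$, so that $a = c^{-b}$. The true values ($a = 2$, $b = 3$), the $x$ grid, the noise mean and SD, and all function names are hypothetical choices.

```python
import math
import random


def make_dataset(a_true, b_true, xs, noise_mean, noise_sd, rng):
    """Underlying power law y = a*x**b plus normally distributed errors
    with a controlled mean and standard deviation."""
    return [a_true * x ** b_true + rng.gauss(noise_mean, noise_sd) for x in xs]


def best_coeff(b, xs, ys):
    """For a fixed exponent b the model is linear in a, so the
    least-squares a is sum(y * x**b) / sum(x**(2*b))."""
    num = sum(y * x ** b for x, y in zip(xs, ys))
    den = sum(x ** (2 * b) for x in xs)
    return num / den


def sse(a, b, xs, ys):
    """Sum of squared errors of the model y = a*x**b."""
    return sum((y - a * x ** b) ** 2 for x, y in zip(xs, ys))


def fit_power_law(xs, ys, b_lo=0.5, b_hi=5.0, steps=200):
    """Least-squares fit of y = a*x**b: coarse grid over b, then
    golden-section refinement, with a solved exactly at each trial b."""
    def profile(b):
        return sse(best_coeff(b, xs, ys), b, xs, ys)

    grid = [b_lo + (b_hi - b_lo) * k / steps for k in range(steps + 1)]
    k = min(range(len(grid)), key=lambda i: profile(grid[i]))
    lo, hi = grid[max(k - 1, 0)], grid[min(k + 1, steps)]

    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(60):
        b1 = hi - inv_phi * (hi - lo)
        b2 = lo + inv_phi * (hi - lo)
        if profile(b1) < profile(b2):
            hi = b2   # minimum lies in [lo, b2]
        else:
            lo = b1   # minimum lies in [b1, hi]
    b = (lo + hi) / 2.0
    return best_coeff(b, xs, ys), b


def rel_err(estimate, true):
    """Relative error |estimate - true| / |true|."""
    return abs(estimate - true) / abs(true)


rng = random.Random(1)                    # fixed seed so the run is repeatable
xs = [float(x) for x in range(1, 21)]     # hypothetical x grid
a_true, b_true = 2.0, 3.0                 # hypothetical true parameters
ys = make_dataset(a_true, b_true, xs, noise_mean=0.0, noise_sd=5.0, rng=rng)

a_fit, b_fit = fit_power_law(xs, ys)

# ASSUMED scaling-parameter form y = (x / c)**b, i.e. a = c**(-b).
c_true = a_true ** (-1.0 / b_true)
c_fit = a_fit ** (-1.0 / b_fit)

print(f"b: fit {b_fit:.4f}, relative error {rel_err(b_fit, b_true):.2e}")
print(f"a: fit {a_fit:.4f}, relative error {rel_err(a_fit, a_true):.2e}")
print(f"c: fit {c_fit:.4f}, relative error {rel_err(c_fit, c_true):.2e}")
```

For a fixed exponent the model is linear in $a$, so $a$ has a closed-form value and only $b$ needs a one-dimensional search. Both forms describe the same family of curves, so this sketch simply converts the fitted $a$ into $c$. A comparison like the one in the study would instead fit each form directly and compare the parameter uncertainties each fit reports, which this sketch does not attempt.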
When trying to fit a straight line to a bunch of data points, if we use $y = ax + b$, then the error $e$ at every data point will be $e = Y - y$, where $Y$ is the data point's position on the y axis. The next step is to take the sum of the squares of the errors: $S = e_1^2 + e_2^2 + \cdots$. Then we substitute: $S = \sum_i (Y_i - y_i)^2 = \sum_i \left(Y_i - (a x_i + b)\right)^2$. To minimize the error, we take the derivatives of $S$ with respect to the coefficients $a$ and $b$ and equate them to zero.

I don't understand why we take a derivative with respect to a coefficient. In $y = mx + c$, the coefficient is $m$, and that's the slope. Normally we take a derivative to find a rate of change at any instant. Here that is the rate of change of $y$ with respect to $x$, which is the slope. But for curve fitting, what does it mean to write $dS/da$ and $dS/db$? What are we actually measuring when we talk about the rate of change of a summation? Why does a coefficient like $a$ or $b$ become so important? Why and how is a coefficient so prominently related to error? How does it affect anything? I understand that $dS/da$ is equated to zero because we want the sum of errors to tend to zero. But how does equating it to zero actually achieve that?

You want to find the parameters for a model which best describes the data. Furthermore, you have specified that you want the best fit with respect to the $l_2$ norm, that is, the fit that minimizes the sum of the squares of the errors. In short, step back from the linear regression case and look at this example as a problem in calculus: $S$ is a function of the parameters, and you are looking for its minimum.

The figure on the left shows the scores for students $1$–$5$, with the average as a dashed line. The right panel shows equation $(1)$ and how it varies with $\mu$. Equation $(1)$ is the sum of the squares of the errors for a constant model $y = \mu$:

$$S(\mu) = \sum_{i=1}^{5} (y_i - \mu)^2 \qquad (1)$$

The sum of the squares of the errors takes its minimum value of $4677.2$ when $\mu = 42.4$. Notice that the sum of the squares of the errors is not $0$. Setting the derivative to zero does not make the errors vanish. It locates the parameter value at which $S$ stops decreasing and starts increasing, which is the minimum. Hopefully, this panel illustrates why you are looking for $0$s of the first derivative. The same holds for $S(a, b)$: $\partial S/\partial a$ measures how the total squared error changes as $a$ is varied with $b$ held fixed. At the best-fit line, both partial derivatives are zero.
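To make the calculus view concrete, here is a minimal Python sketch. The data below are hypothetical numbers chosen for illustration, not the scores behind the figures. The helper names (`sse`, `gradient`, `fit_line`, `best_constant`) are also hypothetical. Setting the two partial derivatives of $S(a, b)$ to zero gives the normal equations $\sum x_i Y_i = a\sum x_i^2 + b\sum x_i$ and $\sum Y_i = a\sum x_i + nb$; `fit_line` solves them in closed form. The script then checks three things at the solution: the gradient is essentially zero, $S$ is not zero, and nudging $a$ in either direction makes $S$ larger.

```python
# Hypothetical data for illustration only; not the scores in the figures.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]


def sse(a, b, xs, ys):
    """S(a, b) = sum_i (Y_i - (a*x_i + b))**2 for the line y = a*x + b."""
    return sum((Y - (a * x + b)) ** 2 for x, Y in zip(xs, ys))


def gradient(a, b, xs, ys):
    """(dS/da, dS/db), from differentiating each squared term with the chain rule."""
    residuals = [Y - (a * x + b) for x, Y in zip(xs, ys)]
    dS_da = sum(-2.0 * x * r for x, r in zip(xs, residuals))
    dS_db = sum(-2.0 * r for r in residuals)
    return dS_da, dS_db


def fit_line(xs, ys):
    """Solve dS/da = 0 and dS/db = 0 (the normal equations) in closed form."""
    n = len(xs)
    sx, sy = sum(xs), sum(ys)
    sxx = sum(x * x for x in xs)
    sxy = sum(x * y for x, y in zip(xs, ys))
    a = (n * sxy - sx * sy) / (n * sxx - sx * sx)
    b = (sy - a * sx) / n
    return a, b


def best_constant(ys):
    """Constant model y = mu: dS/dmu = -2 * sum(y_i - mu) = 0 gives mu = mean(ys)."""
    return sum(ys) / len(ys)


a, b = fit_line(xs, ys)
print(f"best fit: a = {a:.4f}, b = {b:.4f}")
print("gradient at the fit:", gradient(a, b, xs, ys))  # both components ~0
print("S at the fit:", sse(a, b, xs, ys))               # positive: errors remain
for da in (-0.1, 0.1):
    # Moving a away from the fitted value, either way, makes S larger.
    print(f"S(a {da:+.1f}, b) =", sse(a + da, b, xs, ys))

scores = [60.0, 72.0, 55.0, 81.0, 67.0]  # hypothetical scores
mu = best_constant(scores)
print("mu =", mu, " S(mu) =", sum((y - mu) ** 2 for y in scores))
```

The same pattern holds for the constant model $y = \mu$ in the figures. Solving $dS/d\mu = 0$ returns the mean of the scores, which is why the minimum of $S(\mu)$ sits at the average.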
